Spec-Driven Development as a Standard

Spec-Driven Development (SDD) as a team standard: not a methodology from a conference talk, but a response to a pattern we kept seeing in our own work.

The feature was supposed to take two weeks. The ticket had a title, three bullet points, and a Slack thread attached for "context." A developer picked it up, made reasonable assumptions, and started building. An AI assistant helped generate the boilerplate. Things moved fast — right up until the PR review, when someone asked why the data model didn't account for multi-tenancy.

It didn't, because no one had said it needed to.

The feature shipped three weeks late, half-wrong, and with a refactor already scheduled. Sound familiar? It should. This isn't a story about a bad developer or a bad AI tool. It's a story about a bad spec — which is to say, no real spec at all.

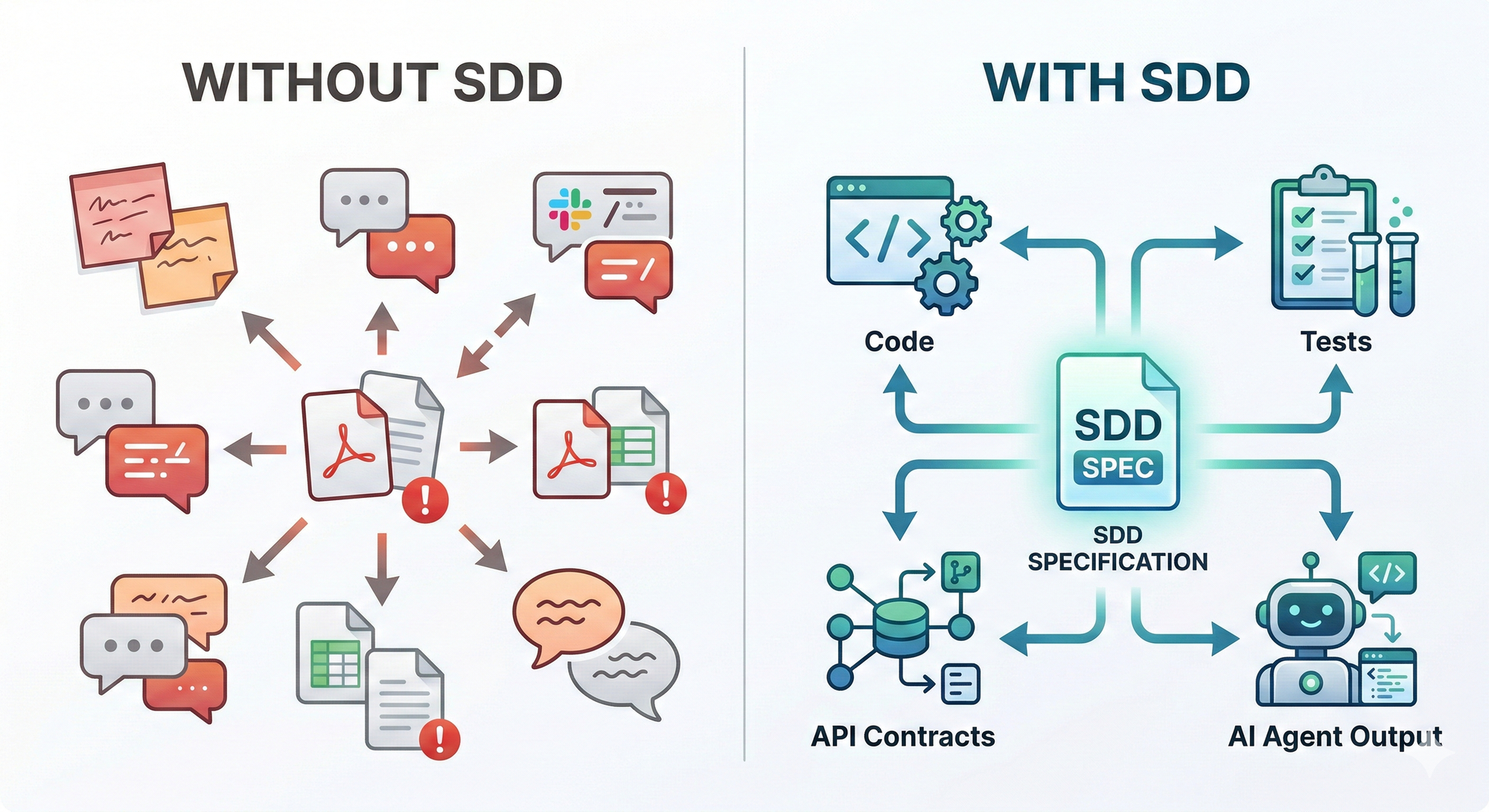

Most development failures aren't technical. They're definitional. The team built exactly what was described — it just wasn't what anyone actually needed. And here's the uncomfortable thing AI-assisted development exposed: we were never as precise as we thought we were. When a human developer hit an ambiguous requirement, they'd ask a question, make a judgment call, or absorb the rework quietly.

When an AI assistant hits the same ambiguity, it just builds something. Confidently. Completely. Based on whatever it could infer. The speed is real. So is the blast radius when the foundation is wrong. Vibe coding has side effects.

The problem was never that our tools were too slow. The problem was that our specifications were never good enough to hand off to anyone — human or machine.

That realization pushed us toward Spec-Driven Development (SDD) as a team standard. Not as a methodology imported from a conference talk, but as a response to a pattern we kept seeing in our own work: requirements that lived in people's heads, PRs that became the first real design conversation, and AI outputs that were technically impressive and contextually wrong.

What follows is an honest account of what SDD actually looks like in practice, where it creates friction on purpose, and what the data showed when we made it non-negotiable.

What Spec-Driven Development Actually Means

The spec isn't the thing you write before the real work starts. The spec is the work.

SDD repositions the specification as the primary artifact of development. Code is the output. The spec is the source of truth. What gets built, for whom, under what constraints, and what "done" actually looks like — all of that lives in the spec before anyone opens an IDE.

Most teams already write something before they build — a Jira ticket, a Confluence page, a Slack thread that someone screenshots and pins. That's not a spec. A spec is a structured document that defines:

- Purpose — the problem being solved and for whom

- Functional requirements and edge cases — not just the happy path, but what happens when things go sideways

- Data models and API contracts — the shape of the system, agreed upon before implementation begins

- Acceptance criteria — a clear, testable definition of done
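If specs live alongside the code, the same structure can be made machine-checkable. The sketch below is hypothetical and not part of our tooling. It uses a Python dataclass whose fields mirror the sections listed above, plus a missing_sections helper that flags anything still empty before implementation starts. The class, field names, and the invoice example are illustrative assumptions.

```python
from dataclasses import dataclass, field, fields


@dataclass
class EdgeCase:
    """A non-happy-path scenario and the behavior the spec requires for it."""
    scenario: str
    expected_behavior: str


@dataclass
class Spec:
    """Hypothetical in-code shape of a spec; fields mirror the sections listed above."""
    purpose: str
    functional_requirements: list[str] = field(default_factory=list)
    edge_cases: list[EdgeCase] = field(default_factory=list)
    # Data models and API contracts: name of a model or endpoint -> its agreed shape.
    contracts: dict[str, str] = field(default_factory=dict)
    # Each criterion should be phrased so a test can pass or fail against it.
    acceptance_criteria: list[str] = field(default_factory=list)

    def missing_sections(self) -> list[str]:
        """Return the names of sections that are still empty, in declaration order."""
        missing = []
        for f in fields(self):
            value = getattr(self, f.name)
            # Whitespace-only text counts as empty; empty lists and dicts are falsy.
            is_empty = not value.strip() if isinstance(value, str) else not value
            if is_empty:
                missing.append(f.name)
        return missing


# A ticket-sized "spec": a title and nothing else.
ticket = Spec(purpose="Let account admins export invoices")
print(ticket.missing_sections())
# ['functional_requirements', 'edge_cases', 'contracts', 'acceptance_criteria']

# Writing the contracts section is where a field like tenant_id gets decided up front.
ticket.contracts["Invoice"] = "id, tenant_id, amount, issued_at"
```

The check is deliberately dumb. It cannot tell a good edge case from a bad one, but it makes "we skipped the data model" visible before review instead of during it.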

In an AI-first workflow, this structure becomes even more critical. The spec becomes the prompt. Feed a well-formed spec to an AI coding agent and you get coherent, reviewable output that maps back to a shared understanding. Feed it a vague brief and you get drift — code that technically runs but doesn't quite solve the right problem, reviewed by engineers who aren't sure what "right" even means.

Think of the spec as a contract — between product and engineering, between human and AI, between this sprint and the next developer who touches the codebase.

In practice, SDD means three things happen in sequence:

- Behavior is defined before implementation — API contracts, data schemas, and acceptance criteria are locked before a single function is written

- The spec becomes the test harness — automated tests are generated from or validated against the specification, not written independently after the fact

- Spec changes are the primary change event — if a requirement shifts, the spec changes first, and everything downstream follows
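The second point, the spec as test harness, can start as simply as giving every acceptance criterion an ID and making tests declare which IDs they verify. This is a minimal sketch under that assumption. The covers decorator, the criterion IDs, and the criteria text are all hypothetical. The report lists criteria that no test claims, plus tests that cite IDs the spec no longer contains, which signals that code and spec have drifted.

```python
from collections.abc import Callable

# Hypothetical acceptance criteria, keyed by ID. The spec is the only place IDs are defined.
SPEC_CRITERIA = {
    "AC-1": "Export includes only invoices belonging to the caller's tenant",
    "AC-2": "Exporting an empty date range returns headers and no rows",
    "AC-3": "Large exports are delivered asynchronously",
}

# Registry: test function name -> criterion IDs it claims to verify.
COVERAGE: dict[str, set[str]] = {}


def covers(*criterion_ids: str) -> Callable[[Callable], Callable]:
    """Decorator that records which acceptance criteria a test verifies."""
    def decorator(test_fn: Callable) -> Callable:
        COVERAGE.setdefault(test_fn.__name__, set()).update(criterion_ids)
        return test_fn
    return decorator


def traceability_report(criteria: dict[str, str], coverage: dict[str, set[str]]) -> dict[str, list[str]]:
    """Compare the spec's criteria with what the tests claim to cover."""
    claimed = set().union(*coverage.values())
    return {
        # In the spec, but no test verifies it.
        "uncovered": sorted(set(criteria) - claimed),
        # Claimed by a test, but not (or no longer) in the spec.
        "unknown": sorted(claimed - set(criteria)),
    }


@covers("AC-1")
def test_export_is_tenant_scoped():
    ...  # real assertions would go here


@covers("AC-2", "AC-4")  # AC-4 was dropped from the spec; this test still cites it
def test_empty_range_export():
    ...


print(traceability_report(SPEC_CRITERIA, COVERAGE))
# {'uncovered': ['AC-3'], 'unknown': ['AC-4']}
```

One possible policy is to fail CI on a non-empty report. A requirement change then has to land in the spec before the tests can follow, which is the ordering the third point describes.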

The discipline shows up in the numbers. Teams we've worked with that adopted SDD as a standard — not a project-by-project experiment, but a firm engineering norm — typically see 30–50% fewer late-stage defects and meaningfully shorter QA cycles. Ambiguity gets resolved in the document, not in the debugger.

Why We Standardized — The Before and After

The decision to standardize wasn't philosophical. It was a response to a concrete, measurable problem: as AI tooling moved from experiment to infrastructure, output quality variance traced almost perfectly back to specification quality variance. Garbage in, garbage out — but the garbage was invisible because it lived in a dozen different places.

The before picture was fragmented in ways that compounded:

- Agent configurations scattered across team Slack channels, personal repos, and Notion pages

- The spec-generation workflow existed in three different versions across four active projects

- Onboarding a new developer to an AI-assisted workflow meant a week of archaeology — not learning the system, but finding it

- Prompt patterns existed as tribal knowledge, living in the heads of whoever had been on the project longest

That last one is the quiet killer. Tribal knowledge feels efficient until someone leaves, a project scales, or a new team member needs to ship something in week two.

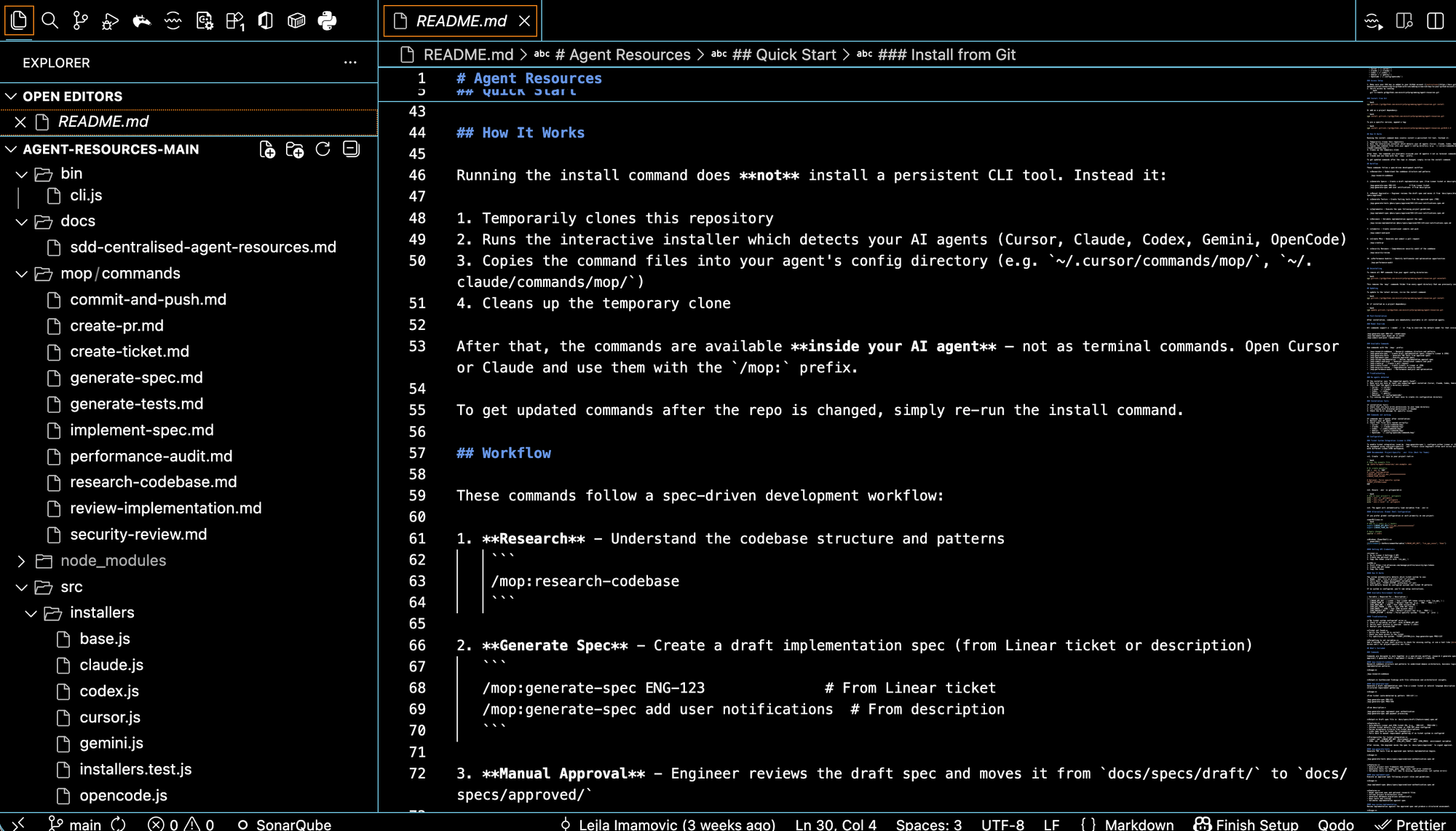

The shift was structural, not ceremonial. We centralized everything into a single agent-resources repository.

Commands like /generate-spec and /implement-spec became /mop:generate-spec and /mop:implement-spec — standardized, versioned, and available across all projects from one source of truth. Agent rules, prompt patterns, and best practices in one place, maintained like code.

The repository isn't just a tool collection. It's institutional knowledge made durable.

The after picture looks different:

- A developer joining a new project finds the same commands, the same patterns, the same expectations

- Spec quality is reviewable because specs are findable

- The process around specs is as standardized as the specs themselves

We spent a lot of early energy improving what a good spec looked like. The bigger unlock was standardizing how specs got created, stored, and used. The artifact matters. The workflow around the artifact matters just as much.

At Ministry of Programming, we've seen this pattern repeatedly across client engagements: projects that started with a rigorous spec shipped with fewer change requests, fewer post-launch defects, and faster handoffs between teams. The upfront investment paid back in the middle of the project — exactly when it was most needed.

When SDD Shines — And When It Needs Calibration

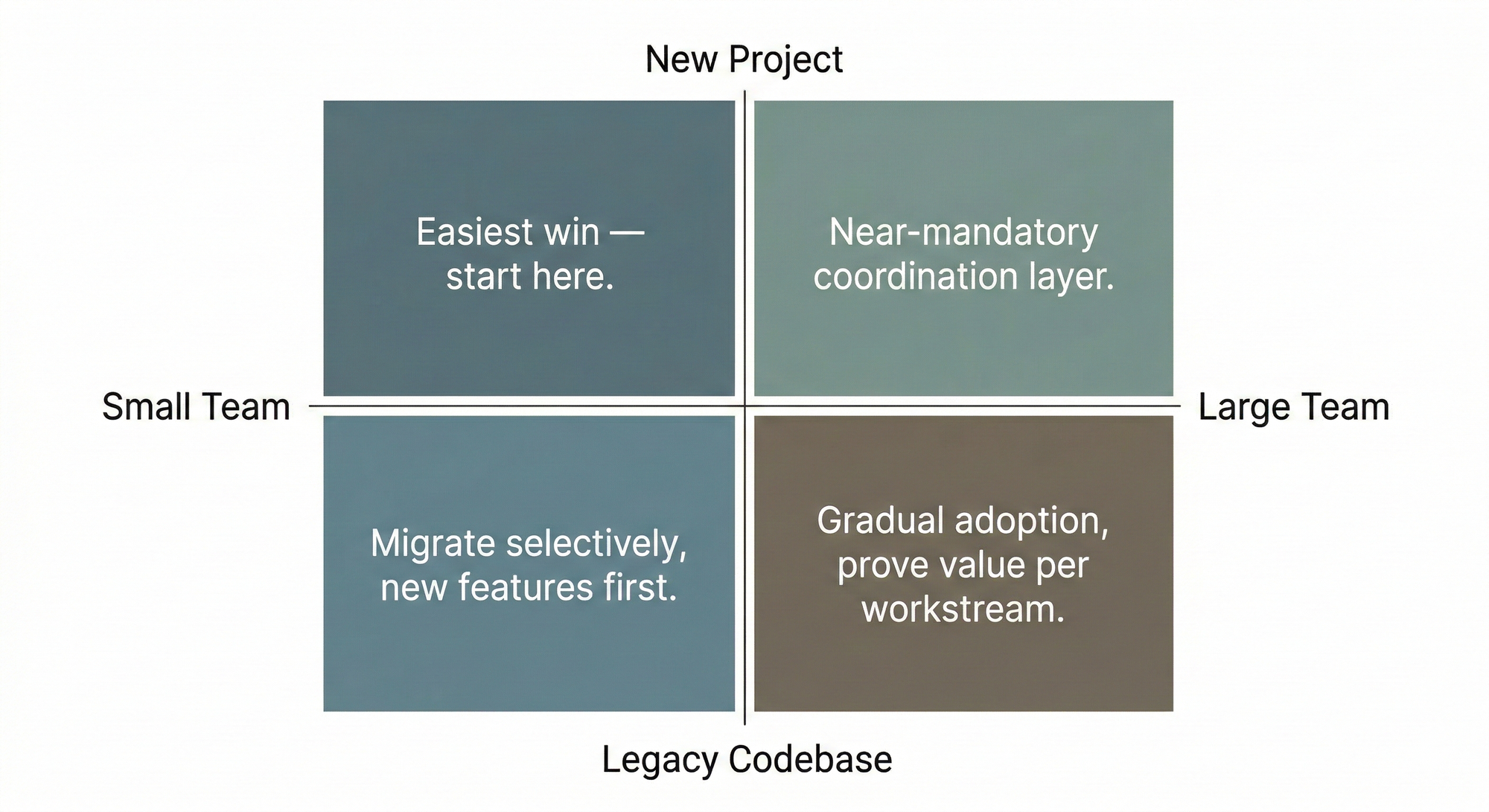

SDD isn't one-size-fits-all. The approach is right; the implementation scales with context. Where you are, in terms of team size and codebase maturity, determines how aggressively you adopt it and where you start.

New project, small team. The easiest win. No existing debt, no legacy assumptions to untangle. Even a two-person team benefits from spec discipline before the chaos of early-stage building sets in. It forces you to say what you're actually building before you start arguing about how.

New project, larger team. Close to mandatory. When multiple engineers are working in parallel, the spec becomes the coordination layer — the thing that replaces a week of alignment meetings and prevents two people from building the same feature in opposite directions. Without it, workstreams diverge fast, and the cost of reconciling them compounds.

Legacy code, small team. Don't try to specify what already exists. Migrate selectively — start with new features and upcoming changes, not refactors of stable code. The goal is to establish the habit in low-risk territory before expanding it.

Legacy code, larger team. The hardest quadrant, and we know it from experience. Trying to retrofit SDD across an existing large codebase all at once drains morale and productivity. The only realistic path is gradual migration: project by project, team by team.

The approach that works best is to start one layer below the spec and establish project-specific rules first: linting configs, architecture constraints, naming conventions, review checklists, anything that makes the codebase's implicit expectations explicit. This does two things. It forces the team to surface and agree on assumptions that have been living in people's heads for months or years. And it builds the habit of working against a defined standard before that standard becomes a full specification.
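Take an architecture constraint as an example. A rule such as "the domain layer never imports infrastructure code" can start as a line in a review checklist and later become a check that runs on every PR. The sketch below uses Python's standard ast module. The package names app.domain and app.infrastructure are hypothetical, and an off-the-shelf linter could do the same job.

```python
import ast

# Hypothetical project rule: modules under app.domain must not import app.infrastructure.
FORBIDDEN_FOR_DOMAIN = ("app.infrastructure",)


def forbidden_imports(source: str, forbidden_prefixes: tuple[str, ...]) -> list[str]:
    """Return imported module names in `source` that fall under a forbidden package."""
    violations = []
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom) and node.module:
            # Relative imports like "from . import x" have module=None and are skipped here.
            names = [node.module]
        else:
            continue
        for name in names:
            # Match the package itself or any submodule, but not "app.infrastructure_old".
            if any(name == p or name.startswith(p + ".") for p in forbidden_prefixes):
                violations.append(name)
    return violations


domain_module = """
from app.domain.invoice import Invoice
from app.infrastructure.db import session
import app.infrastructure.cache
"""

print(forbidden_imports(domain_module, FORBIDDEN_FOR_DOMAIN))
# ['app.infrastructure.db', 'app.infrastructure.cache']
```

The value is less in the twenty lines of code than in the conversation needed to write them. Someone has to state the layering rule out loud, and the team has to agree on it.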

Once that foundation is in place, the move to SDD feels like a natural next step rather than an imposed process change. The team is already asking "what does correct look like here?" before they write code — the spec just formalizes and extends that question.

The tool matters less than the habit. A shared doc template is a valid v1.

On tooling: we built /mop:generate-spec because our workflow needed it to fit our stack and our AI agent setup — that was a practical choice, not a philosophical one. Smaller teams shouldn't wait for the perfect tool. Start with whatever creates the least friction. A well-structured Google Doc that everyone actually reads beats a sophisticated spec system that no one uses consistently.

The pattern across all four scenarios is the same: match the adoption surface to your actual constraints, not to an idealized version of your team.

The Hard Questions, Answered Honestly

Every team we've introduced SDD to has pushed back. That's not a red flag — it's a sign the team is thinking. Here are the objections we hear most, and what we've actually found.

"Writing specs slows us down at the start."

Yes. That's the point. The slowdown is front-loaded — and it's the right trade. We've watched three-round PR review cycles, mid-sprint scope reversals, and "quick fixes" that took two weeks because nobody agreed on what the fix was supposed to do. The spec path is slower on day one and faster across the full delivery arc. That's not a theory — it's what the cycle time data shows when you measure end-to-end instead of just velocity-to-merge.

"AI can generate specs from requirements — do we still need to write them?"

AI can draft a spec. It cannot replace the thinking that goes into one. The value isn't the document — it's the forcing function. The moment where someone writes "users can filter by date" and then has to answer: Which timezone? What if there's no data in the range? Does it persist across sessions? That's where seven edge cases surface that nobody thought about. AI won't surface them for you. It'll confidently paper over them.
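Here is what the date-filter questions look like once someone has answered them. In this minimal sketch the answers are assumptions chosen for illustration, not recommendations. Dates are interpreted in the user's timezone, the range is inclusive, an empty range returns an empty list rather than an error, and a reversed range is rejected. The function name and record shape are hypothetical. Session persistence is a UI decision and sits outside this function.

```python
from datetime import date, datetime, timedelta, timezone, tzinfo


def filter_by_date(records: list[dict], start: date, end: date, tz: tzinfo) -> list[dict]:
    """Return records whose created_at falls on a calendar day in [start, end], as seen in tz.

    Each rule below is a hypothetical answer to a question the spec had to settle:
    - Which timezone? The user's (tz), not UTC.
    - Inclusive or exclusive? Inclusive on both ends.
    - No data in range? Return [], not an error.
    - start after end? Reject it.
    """
    if start > end:
        raise ValueError("start must not be after end")
    # created_at is assumed to be a timezone-aware datetime (stored in UTC).
    return [r for r in records if start <= r["created_at"].astimezone(tz).date() <= end]


# 02:00 UTC on March 1 is still February 29 at UTC-5.
orders = [{"id": 1, "created_at": datetime(2024, 3, 1, 2, 0, tzinfo=timezone.utc)}]
utc_minus_5 = timezone(timedelta(hours=-5))

print(len(filter_by_date(orders, date(2024, 3, 1), date(2024, 3, 1), timezone.utc)))  # 1
print(len(filter_by_date(orders, date(2024, 3, 1), date(2024, 3, 1), utc_minus_5)))   # 0
```

The same order appears or disappears depending on a single unstated assumption. For real users you would pass an IANA zone such as zoneinfo.ZoneInfo("America/New_York") rather than a fixed offset.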

"What about fast-moving, exploratory work?"

A lightweight spec for a spike or prototype is still better than none. Define what you're trying to learn, what you'll build to learn it, and what done looks like. That's a spec. It takes 20 minutes and saves the conversation at the end of the sprint where nobody agrees on whether the experiment succeeded.

"Our existing codebase has no specs. Where do we start?"

Don't try to document what exists. Start speccing what you're about to build or change. The legacy code doesn't need a spec retroactively — the next PR touching it does. Coverage grows organically, and you never have to declare a documentation sprint that everyone resents.

"Is this just documentation by another name?"

No. They overlap, but they're not the same thing. The key difference is intent and timing. Documentation describes what was built. A spec prescribes what will be built.

The direction of the arrow matters. Spec first, code second — not the other way around.

What We're Actually Asking Teams to Do

This isn't a mandate to adopt a new methodology or retool your entire delivery process. It's a much smaller ask: put the thinking earlier, and give it a form that survives the handoff.

That's what Spec-Driven Development actually is. Not a framework imposed from above, but a discipline that acknowledges what most experienced engineers already know: the decisions that shape a feature are mostly made in the first few hours of working on it. SDD just makes those decisions legible, reviewable, and useful to the next person in the chain, including the AI tooling that's increasingly doing the work alongside us.

The starting point is practical:

- For new projects — use SDD from day one. The spec becomes the contract, and the implementation follows it.

- For existing projects — the next time you're about to start a feature, write the spec first. One feature. See what it changes.

- For tooling: the /mop:generate-spec and /mop:implement-spec commands are available now. The agent-resources repository is where you begin.

The spec isn't documentation you write after you understand the problem. It's how you come to understand the problem.

What teams typically find, once they try it, isn't that the spec slows them down. It's that the absence of a spec was already slowing them down — they just couldn't see it in the rework cycles, the misaligned reviews, the features that shipped and still missed the mark.

We've been building software long enough to know that most of what goes wrong in delivery was knowable before the first commit. SDD doesn't eliminate surprises — but it moves them to where they're cheap to resolve.

That's not a methodology. That's just good engineering.

Reach out if you want to walk through what this looks like on your current projects.