Building Conversational Voice AI Agents with ElevenLabs: A Practical Guide to Customer Support Automation

A hands-on guide to building conversational Voice AI agents with ElevenLabs, based on real customer support automation experience in regulated environments.

Voice AI is rapidly transforming customer support. As organizations move beyond static, menu-based chatbots, conversational voice agents are emerging as a more natural and scalable interface for customer interaction. This article provides a practical guide to building, configuring, and deploying conversational Voice AI agents using ElevenLabs, drawing on hands-on experience from a banking-style prototype application called Ministry of Banking.

Rather than focusing on theoretical possibilities, the goal here is to share concrete lessons learned: what works in real implementations, where common pitfalls arise, and how to approach the unique challenges of voice-based AI systems.

Customer Support 2.0

Customer support is undergoing a fundamental shift. Traditional chatbots, often built on static decision trees, struggle to handle nuanced conversations or unexpected user behavior. In contrast, modern conversational AI agents are capable of understanding context, maintaining multi-turn dialogue, and executing real actions within applications.

ElevenLabs has emerged as a strong platform in this domain, offering high-quality text-to-speech and speech-to-text capabilities combined with conversational AI tooling. This article explores how those capabilities can be applied in practice, particularly in regulated and sensitive domains such as banking. The Ministry of Banking prototype serves as a reference point throughout, illustrating real-world constraints in terms of security, reliability, and user trust.

What ElevenLabs Provides

The ElevenLabs platform evolves quickly and offers a broad set of audio AI capabilities designed for production use. At its core are state-of-the-art text-to-speech and speech-to-text services supporting more than 30 languages, along with thousands of voices and accents. These capabilities are complemented by voice cloning, which enables organizations to create custom, brand-aligned voices. Most importantly, ElevenLabs provides tooling for building conversational AI agents that can be embedded directly into applications.

A centralized dashboard allows teams to configure agents, manage tools, monitor conversations, and review analytics. While the platform continues to mature, it already provides a solid foundation for building end-to-end voice-driven customer support experiences.

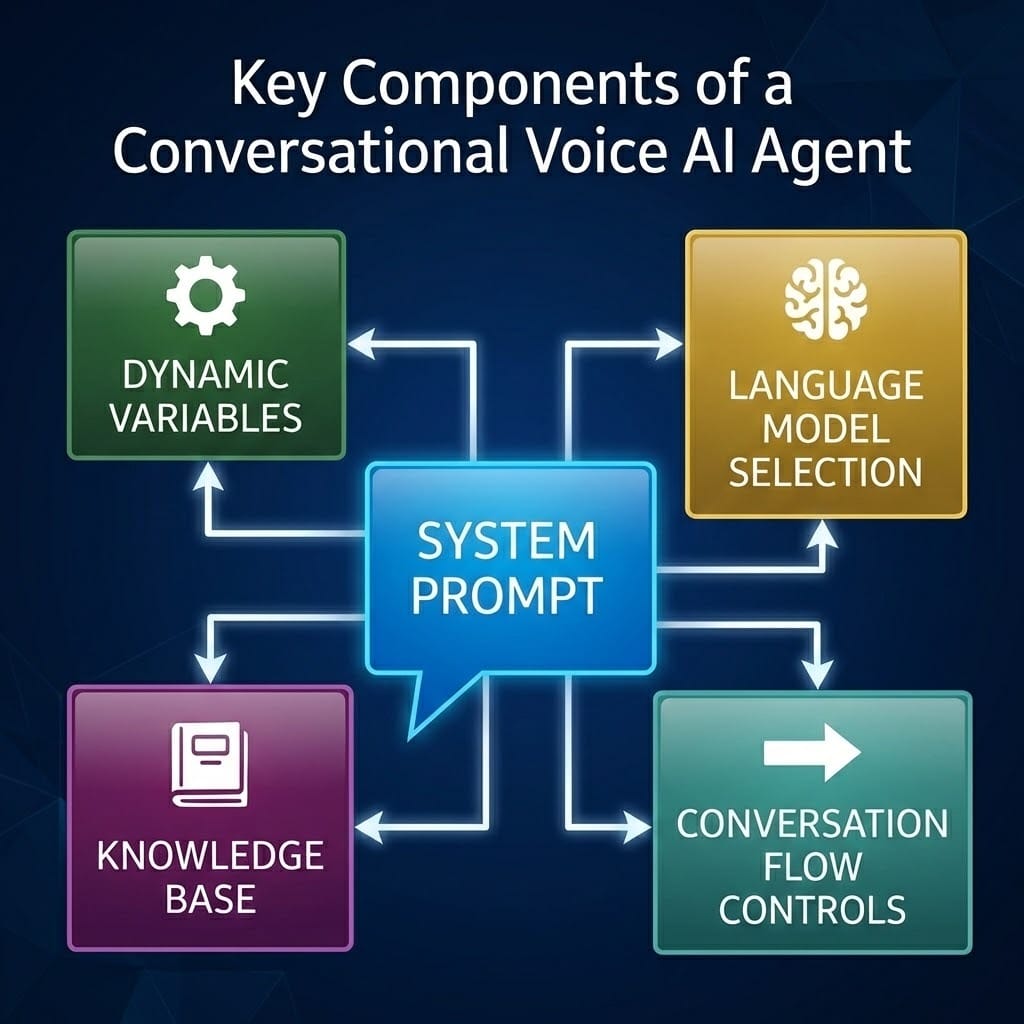

Key Components of a Conversational Voice AI Agent

A conversational agent built on ElevenLabs consists of several tightly coupled components that collectively define how the agent behaves and interacts with users.

At the center is the system prompt, which acts as the behavioral foundation of the agent. It defines the agent's personality, tone, goals, constraints, and workflows. In practice, there are two common approaches: a comprehensive prompt that captures all rules and logic in text, or a hybrid approach that combines textual instructions with visual workflow diagrams. The latter often improves clarity when reasoning about complex flows.

Language model selection plays a critical role in overall agent quality. For applications involving sensitive data or financial operations, higher-reasoning models such as Claude Sonnet 4.5 consistently outperform mid-tier alternatives. Although these models come with higher cost and latency, the trade-off is usually justified by more reliable instruction following, better tool usage, and improved handling of edge cases.

Conversation flow controls further shape the user experience. ElevenLabs allows fine-grained control over interruption behavior, silence handling, turn-taking, and automatic language detection. These settings are particularly important in voice interfaces, where timing and conversational rhythm strongly influence perceived quality.

To provide domain-specific knowledge, agents can be connected to a knowledge base and use retrieval-augmented generation to incorporate relevant information when responding. Uploading documents or linking URLs enables the agent to answer questions about products, policies, or procedures without embedding volatile information directly into prompts.

Finally, dynamic variables allow each session to be personalized at initialization time, enabling context-aware interactions tailored to the individual user.
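A minimal sketch of the idea, assuming a `{{name}}` placeholder style: the template text, the variable names, and the `render_prompt` helper are all illustrative and are not taken from the platform's API. In a real deployment the platform performs the substitution itself; the sketch only shows why missing values should fail loudly rather than reach the caller as a raw placeholder.

```python
import re

# Hypothetical prompt fragment; placeholder names are illustrative only.
PROMPT_TEMPLATE = (
    "You are a support agent for Ministry of Banking. "
    "The caller's first name is {{first_name}} "
    "and their preferred language is {{language}}."
)

_PLACEHOLDER = re.compile(r"\{\{\s*(\w+)\s*\}\}")


def render_prompt(template: str, variables: dict) -> str:
    """Replace every {{name}} placeholder, raising KeyError on a missing value."""

    def substitute(match):
        name = match.group(1)
        if name not in variables:
            raise KeyError(f"missing dynamic variable: {name}")
        return str(variables[name])

    return _PLACEHOLDER.sub(substitute, template)


session_prompt = render_prompt(
    PROMPT_TEMPLATE, {"first_name": "Ana", "language": "Croatian"}
)
```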

Tool Integration: Turning Conversation into Action

Tools form the bridge between conversational intelligence and real application behavior. They allow agents not only to talk, but to act.

Client-side tools are used to trigger frontend behavior such as navigation, UI state changes, or user notifications. These tools are especially useful when the agent is guiding users through an interface while maintaining conversational continuity.

Server-side tools, implemented as webhooks, connect the agent directly to backend systems. Through these tools, an agent can create or modify database records, perform CRUD operations, integrate with third-party services, or trigger workflows such as account creation, card blocking, transaction processing, or email delivery through services like Resend. Server-side tools also include integration tools, which use ElevenLabs' built-in connections to platforms such as Zendesk, Cal.com, and Twilio so that the agent can perform actions in those services directly.
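To make the webhook idea concrete, here is a minimal sketch of the backend logic behind a card-blocking tool. The payload fields (`card_id`, `user_id`), the response shape, and the in-memory `CARDS` store are assumptions made for illustration; the actual request format depends on how the tool is configured, and a real handler would sit behind an authenticated HTTP endpoint.

```python
import json

# In-memory stand-in for a real card database (illustrative data only).
CARDS = {"card_001": {"owner": "user_42", "status": "active"}}


def handle_block_card(raw_body: str):
    """Process a hypothetical 'block_card' call and return (status, JSON body)."""
    try:
        payload = json.loads(raw_body)
        card_id = payload["card_id"]
        user_id = payload["user_id"]
    except (json.JSONDecodeError, KeyError, TypeError):
        error = {"ok": False, "error": "card_id and user_id are required"}
        return 400, json.dumps(error)

    if not isinstance(card_id, str) or not isinstance(user_id, str):
        error = {"ok": False, "error": "card_id and user_id must be strings"}
        return 400, json.dumps(error)

    card = CARDS.get(card_id)
    # Never act on a card that does not belong to the calling user.
    if card is None or card["owner"] != user_id:
        error = {"ok": False, "error": "card not found for this user"}
        return 404, json.dumps(error)

    card["status"] = "blocked"
    result = {"ok": True, "card_id": card_id, "status": "blocked"}
    return 200, json.dumps(result)
```

Returning short, structured error messages gives the agent something specific to relay or recover from, instead of a generic failure.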

In addition, ElevenLabs provides system-level tools that operate entirely within the conversational context. These tools can end conversations, detect language changes, transfer the user to another agent, or escalate to a human operator without making external API calls.

Experience shows that tool reliability depends heavily on prompt quality. While ElevenLabs provides a dedicated interface for defining tools, writing critical tool descriptions inside the system prompt significantly improves consistency. Effective tool definitions clearly specify usage conditions, parameter expectations with examples, error-handling behavior, and expected response formats.
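One way to keep such in-prompt tool descriptions consistent is to generate them from a small structure that covers the four elements listed above. This is only a sketch: the `ToolDoc` class, its field names, and the `block_card` example are hypothetical and do not reflect any platform schema.

```python
from dataclasses import dataclass


@dataclass
class ToolDoc:
    """Prompt-facing documentation for one tool (field names are illustrative)."""

    name: str
    use_when: str
    parameters: dict  # parameter name -> expectation, ideally with an example
    on_error: str
    returns: str


def render_tool_doc(tool: ToolDoc) -> str:
    """Render the documentation as a plain-text block for the system prompt."""
    lines = [f"Tool: {tool.name}", f"Use when: {tool.use_when}", "Parameters:"]
    lines += [f"  - {name}: {rule}" for name, rule in tool.parameters.items()]
    lines += [f"On error: {tool.on_error}", f"Returns: {tool.returns}"]
    return "\n".join(lines)


block_card_doc = ToolDoc(
    name="block_card",
    use_when="the verified user reports a card as lost or stolen",
    parameters={
        "card_id": "internal card identifier, e.g. card_001",
        "user_id": "identifier of the verified user, e.g. user_42",
    },
    on_error="apologize, do not retry more than once, offer a human operator",
    returns="JSON object with ok, card_id, and status",
)
tool_section = render_tool_doc(block_card_doc)
```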

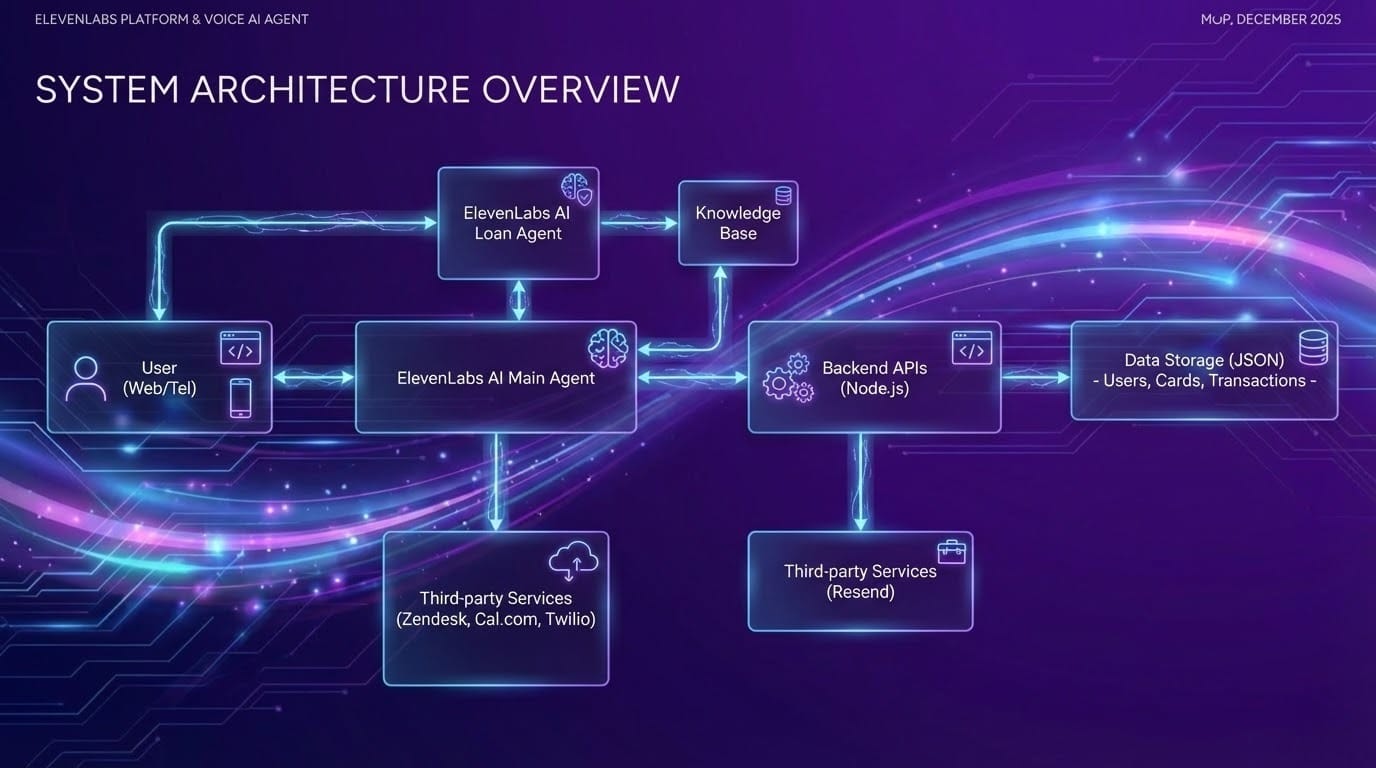

System Architecture for Voice AI Customer Support

A production-ready Voice AI system is rarely a single agent operating in isolation. In practice, it consists of multiple integrated components working together.

One effective pattern is multi-agent orchestration. In complex domains, a primary agent handles general queries and actions, and routes specialized topics, such as loan applications or fraud incidents, to dedicated sub-agents with narrower knowledge and stricter guardrails. Workflow-based orchestration offers a practical and reliable way to manage agent handoffs when more control over the process is desired, though built-in transfer mechanisms are also available for standard scenarios.
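The handoff logic can be pictured as a small routing table. The sketch below is an assumption-laden illustration: the topic labels, agent identifiers, and the rule that unverified callers stay with the primary agent are all hypothetical, and in a real system the topic would be determined by the primary agent's language model rather than passed in as a string.

```python
# Hypothetical routing table: topic label -> sub-agent identifier.
ROUTES = {
    "loan_application": "loans_agent",
    "fraud_incident": "fraud_agent",
}
PRIMARY_AGENT = "primary_agent"


def route(topic: str, identity_verified: bool) -> str:
    """Pick the agent for a topic; unverified callers stay with the primary agent."""
    target = ROUTES.get(topic, PRIMARY_AGENT)
    if target != PRIMARY_AGENT and not identity_verified:
        # Assumed guardrail: specialized agents only see verified callers.
        return PRIMARY_AGENT
    return target
```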

Security considerations are central in customer support scenarios. Identity verification is a prerequisite before accessing sensitive data or performing any actions. In the banking prototype, email-based OTP verification was used to confirm user identity before proceeding with protected operations.
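The server-side part of an OTP check could look roughly like the sketch below. The six-digit length, five-minute validity window, and three-attempt limit are assumed values chosen for illustration, not details of the prototype; delivery of the code by email is omitted.

```python
import hmac
import secrets
import time
from dataclasses import dataclass
from typing import Optional

OTP_TTL_SECONDS = 300  # assumed validity window
MAX_ATTEMPTS = 3       # assumed attempt limit


@dataclass
class OtpChallenge:
    code: str
    expires_at: float
    attempts_left: int = MAX_ATTEMPTS


def issue_otp(now: Optional[float] = None) -> OtpChallenge:
    """Create a six-digit code from a cryptographically secure source."""
    now = time.time() if now is None else now
    code = f"{secrets.randbelow(10**6):06d}"
    return OtpChallenge(code=code, expires_at=now + OTP_TTL_SECONDS)


def verify_otp(
    challenge: OtpChallenge, submitted: str, now: Optional[float] = None
) -> bool:
    """Check a submitted code, consuming one attempt per call."""
    now = time.time() if now is None else now
    if challenge.attempts_left <= 0 or now > challenge.expires_at:
        return False
    challenge.attempts_left -= 1
    # Constant-time comparison avoids leaking how much of the code matched.
    return hmac.compare_digest(challenge.code.encode(), submitted.encode())
```

Keeping the attempt counter and expiry on the server, rather than in the prompt, means the limit holds even if the agent misjudges the conversation.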

Prompt Engineering for Voice AI

Prompt engineering for voice agents differs fundamentally from prompt design in text-only systems. Spoken language introduces ambiguity, timing challenges, and interpretation issues that do not exist in written interactions.

Effective prompts are structured into clear, well-defined sections. These typically cover personality and tone, explicit goals, non-negotiable guardrails, conversation flow rules, tool usage instructions, error recovery strategies, and data normalization requirements. The structure helps reduce misinterpretation and makes the prompt easier to understand and maintain.
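As a rough illustration, the sections can be assembled programmatically so that none is forgotten. The section names follow the list above; the `# ` heading marker and the `build_prompt` helper are arbitrary choices for this sketch, not a required format.

```python
SECTION_ORDER = [
    "Personality and tone",
    "Goals",
    "Guardrails",
    "Conversation flow",
    "Tool usage",
    "Error recovery",
    "Data normalization",
]


def build_prompt(sections: dict) -> str:
    """Join sections in a fixed order, rejecting prompts with empty sections."""
    missing = [name for name in SECTION_ORDER if not sections.get(name, "").strip()]
    if missing:
        raise ValueError("missing prompt sections: " + ", ".join(missing))
    return "\n\n".join(
        f"# {name}\n{sections[name].strip()}" for name in SECTION_ORDER
    )
```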

Conciseness and completeness must be carefully balanced. Every relevant edge case should be addressed, but unnecessary verbosity can lead to unexpected behavior. Even small phrasing choices can have outsized effects. For example, an instruction such as “Offer 2-3 options” may be misinterpreted as “skip the first option and present the second and third,” rather than “offer two to three options.” Precision in language is critical.

Data Normalization in Voice Interfaces

Voice agents do not inherently understand how humans expect numbers, dates, or formatted strings to be spoken. Without explicit instructions, the agent may produce inconsistent or even unsafe output. Email addresses may be read literally, monetary amounts may be phrased incorrectly, or masked card numbers may be read inappropriately. OTP codes are particularly sensitive and must be treated as a continuous sequence of digits, without pauses or special characters.

Defining explicit normalization rules in prompts is essential to ensure that spoken output is consistent, secure, and user-friendly, especially when handling sensitive information.
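The same rules can also be applied in code before text reaches the speech layer, or used to generate examples for the prompt. The helpers below are a sketch of that idea; the exact spoken forms (for instance "card ending in") are assumptions, and English digit words are used purely for illustration.

```python
DIGIT_WORDS = [
    "zero", "one", "two", "three", "four",
    "five", "six", "seven", "eight", "nine",
]


def speak_digits(code: str) -> str:
    """Spell a numeric code digit by digit, for example an OTP."""
    if not (code.isascii() and code.isdigit()):
        raise ValueError("code must contain ASCII digits only")
    return " ".join(DIGIT_WORDS[int(ch)] for ch in code)


def speak_email(address: str) -> str:
    """Read an email address with its symbols named explicitly."""
    return address.replace("@", " at ").replace(".", " dot ")


def speak_masked_card(card_number: str) -> str:
    """Expose only the last four digits of a card number."""
    digits = [ch for ch in card_number if ch.isascii() and ch.isdigit()]
    if len(digits) < 4:
        raise ValueError("card number must contain at least four digits")
    return "card ending in " + speak_digits("".join(digits[-4:]))
```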

Iterative Testing and Refinement

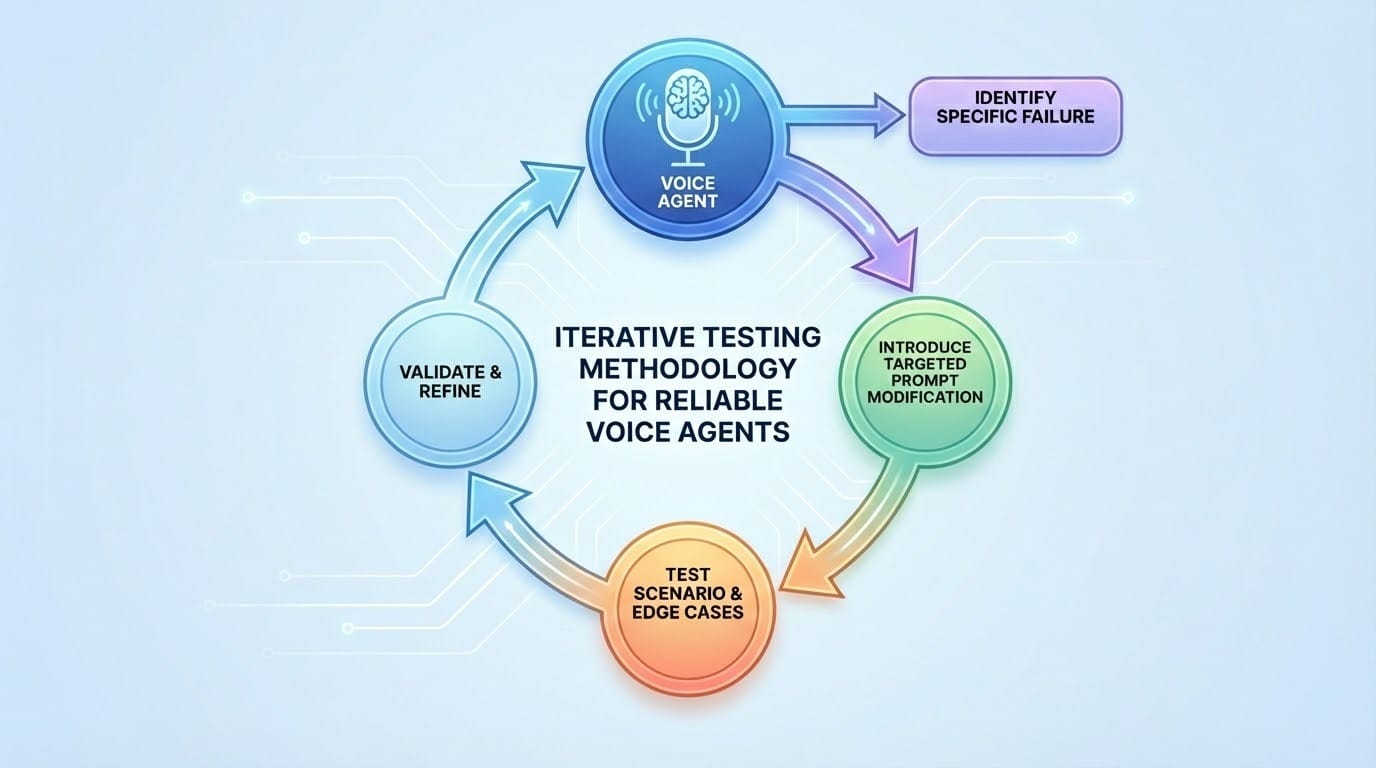

Developing a reliable voice agent requires an iterative testing methodology. The recommended approach is to identify a specific failure, introduce a targeted prompt modification, and test the same scenario multiple times, because LLM output is probabilistic and identical inputs can yield different outputs across conversations. Related scenarios and edge cases should then be validated before moving on. In this way, the agent is refined gradually, with each targeted prompt change guiding it closer to the correct behavior.

Single-change iterations are strongly advised. Making multiple changes simultaneously makes it difficult to identify which modification caused a behavioral shift.
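Because a single passing run proves little, it helps to think in terms of a pass rate per scenario. The sketch below assumes each scenario can be wrapped in a function that returns `True` on success; `simulated_scenario` is a hypothetical stand-in that imitates a flaky agent with a seeded random generator, since a real check would have to drive an actual conversation.

```python
import random
from typing import Callable


def pass_rate(run_scenario: Callable[[], bool], runs: int = 10) -> float:
    """Run one scenario repeatedly and return the fraction of passing runs."""
    if runs <= 0:
        raise ValueError("runs must be positive")
    passed = sum(1 for _ in range(runs) if run_scenario())
    return passed / runs


def simulated_scenario(success_probability: float, seed: int) -> Callable[[], bool]:
    """Simulated stand-in for a real conversation test (illustrative only)."""
    rng = random.Random(seed)
    return lambda: rng.random() < success_probability


# Compare the same scenario before and after ONE prompt change.
before = pass_rate(simulated_scenario(0.6, seed=1), runs=20)
after = pass_rate(simulated_scenario(0.9, seed=1), runs=20)
```

Comparing pass rates for the same scenario before and after one change makes it easier to attribute a behavioral shift to that change.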

Real-World Application Potential

Conversational Voice AI is particularly effective in scenarios that combine structure (predictable patterns or workflows) with scale (high-volume or repeated interactions). These include repetitive inquiries such as guiding users through their account details or FAQs, structured interactions like collecting information via forms or surveys, and use cases requiring 24/7 availability across time zones. Voice agents are highly effective where consistent information delivery is critical and human error must be minimized.

Practical applications of voice agents are diverse. Examples include shopping platform support, banking support and card management, automated survey calls, and tablet-based restaurant ordering systems that handle menu questions, dietary preferences, and order placement.

Banking Prototype Test Cases

To validate robustness, a series of realistic banking scenarios was designed and executed against the prototype agent.

In a single comprehensive scenario, the user inquired about active cards, reported a lost card, explored loan options, reported an app freezing issue, and flagged an incorrect transaction. The agent verified the user’s identity, blocked and replaced the card, scheduled a credit consultation, resolved the app-freezing problem, created a transaction dispute, opened a support ticket, and concluded the call naturally.

Another scenario focused on a suspected security incident. After verifying identity via OTP, account number, and date of birth, the agent froze the account and created an urgent fraud ticket, informing the user of next steps.

Additional scenarios covered failed verification attempts, account creation with repeated OTP failures, deliberate trolling behavior, and a multi-language interaction involving Croatian and English, agent transfers, and knowledge base usage.

Collectively, these tests demonstrated the agent’s ability to maintain security, adapt to user behavior, and deliver a coherent conversational experience across languages and complexity levels.

Key Takeaways

- Language model selection is critical: high-reasoning models justify their cost in complex or sensitive domains.

- Prompt structure matters: clearly organized sections and explicit guardrails outperform unstructured instructions.

- Nothing can be assumed: every expected behavior must be tested and, if necessary, explicitly defined in the prompt. Testing should be iterative and disciplined, with single-change modifications validated across scenarios.

- Finally, important tools should be documented not only in configuration interfaces, but directly within prompts to ensure consistent usage.

Conclusion

Building conversational Voice AI agents represents a fundamental shift in how software can interact with users. The primary challenge is not code complexity, but the translation of deterministic business logic into probabilistic natural language instructions.

Unlike traditional software, where code executes exactly as written, Voice AI development requires embracing uncertainty while carefully designing guardrails that guide behavior toward desired outcomes. ElevenLabs provides powerful capabilities to support this approach, though the platform continues to evolve. The learning curve is steep, but real-world applications can deliver significant value when designed thoughtfully.

Conversational Voice AI works best when it’s designed for real users, real constraints, and real systems, not just impressive demos.